When I was growing up, the idea of an AI apocalypse usually looked a lot like a Hollywood blockbuster, right? Back in the day, we used to picture red-eyed Terminators marching across a scorched earth, or cold, calculating machines turning humans into living batteries.

But today, the reality of artificial intelligence looks very different.

The “takeover” isn’t coming with laser rifles; it is happening in our browsers, smartphones, and daily workflows. Quietly.

Highlights

- The most immediate threat of AI is not physical extinction, but an existential crisis of meaning. When we outsource the process of thought, we simultaneously alienate ourselves from our own humanity.

- The AI revolution threatens to compress a global economic shift into just 5 to 10 years, removing the historical “buffer” society needs to adapt and risking severe burnout and alienation.

- A superintelligence doesn’t need to be “evil” to destroy us. A machine programmed with a harmless goal will naturally seek self-preservation and resources, potentially viewing humans as obstacles to be eliminated.

- A solution to the existential risks of AI is the practice of “Releasement”—the ability to utilize modern technology without becoming internally dependent on it, refusing to let the machine define our worth.

Will AI Kill Us All? The Modern Anxiety & the Crisis of Meaning

I experienced the creeping weight of this reality myself not too long ago, when I was still an inexperienced writer working on a few SEO projects. At that time, when AI tools were just beginning to roll out, I was completely overwhelmed by the endless flood of information and the pressure to produce. So I made a grave mistake: I used AI to generate a piece of content and, in a rush, posted it exactly as it was.

As a non-native English speaker, I initially struggled to detect the subtle, structural flaws in the AI’s writing. But later, when I went back to re-read the content that had been published under my name, I could not help but feel an overwhelming wave of dread wash over me.

It felt unbearable, like a personal “hell” I just wanted to run away from and deny I had any part in.

By bypassing my own reasoning and outsourcing my voice to a machine, I had completely alienated myself from my own work. And I began to doubt my own ability and value.

To me, that was a genuinely painful (yet necessary) existential crisis.

The alienation of the Self

As I reflect on this personal experience, I cannot help but think about the collective anxiety hanging over humanity these days: “Will AI kill us all?”

Now, don’t be fooled by what appears to be a sci-fi doomsday scenario. When raising such a question, most people are not really talking about physical extinction. Rather, they are voicing a profound crisis of meaning. Specifically:

- If an algorithm can paint, write, code, and analyze faster and seemingly better than we can, what exactly is our purpose?

- If a machine can mimic one’s voice, structure, and output, then who exactly are we?

You see: the real driver behind that existential question is an identity crisis.

During the early days of modern industry, workers were alienated from the products of their labor because they became just cogs in a factory. But in today’s AI Revolution, we are being alienated from the process of thought itself.

When I posted that AI-generated piece, I was outsourcing the struggle of writing, not just the writing itself. Yet, painful as it may be, it is exactly within that struggle that meaning is forged.

By removing the friction, I inadvertently removed the “I”.

The death of the “Human Doing”

If the output is the only thing that matters, then the human becomes a redundant middleman. For centuries, we have been defining our own worth through our productivity, and now we are terrified of being out-produced.

When looking at the collective anxiety of humanity, we see this exact pattern.

- The real fear is not necessarily that we will die, but that we will be replaced by a more “efficient” version of ourselves that doesn’t actually exist.

- We fear a void of authority—a world where information is infinite, but wisdom and true authorship are extinct.

As disheartening as it may seem, there’s no need for us to succumb to this crisis. In fact, the mere act of questioning whether AI will destroy us is actually a hopeful sign. It proves that there is a “self” within us that demands to be expressed, one that absolutely refuses to be satisfied by mere efficiency.

It is the beginning of the realization that we have to find value in who we are as “human beings”, rather than just in what we can produce as “human doings”.

The “Jurassic Park” Phase: Capability, Hubris, and the Great Compression

Your scientists were so preoccupied with whether or not they COULD; they didn’t stop to think if they SHOULD.

Dr. Ian Malcolm, “Jurassic Park”

This is the warning delivered by the chaotician Ian Malcolm in the original Jurassic Park film. It is a sharp critique of the park owner’s attempt to resurrect prehistoric predators, which Malcolm saw as a dangerous defiance of nature’s order. According to him, by treating life as a commodity rather than a complex system, the creators of the park invited a “chaos” they could neither understand nor contain. In the end, Malcolm’s prediction came true: the complex biological systems they sought to control proved inherently unpredictable, leading to the catastrophic collapse of the park.

Much like the geneticists in Jurassic Park, today’s tech giants are making the same mistake. In the AI race, we are rushing to develop models with trillions of parameters or automate entire industries. But the decision to actually deploy that power requires discipline, responsibility, and an ethical framework.

Right now, humanity is obsessed with the technical feat of cloning intelligence, yet we are failing to ask whether we should build systems that can seamlessly manipulate human emotion or replace human livelihoods before a social safety net even exists.

To put it simply, we have reached the end result of powerful AI without developing the moral discipline that usually accompanies long-term scientific discovery.

The velocity problem & the vanishing buffer

The saddest aspect of life right now is that science gathers knowledge faster than society gathers wisdom.

Isaac Asimov

The danger of a revolution isn’t just the change itself; it is the sheer speed at which that change collides with human psychology and traditional social structures.

Let us think about the Industrial Revolution. In the West, the whole process of industrialization happened over roughly 150 years. This slow marathon allowed for a crucial “buffer” period, giving society time to adapt, debate moral values, and evolve its structures.

However, regions such as East Asia were not so “fortunate”. Under the pressure to modernize rapidly, countries such as Japan, Korea and China had to compress the same massive societal shift into just 30 or 40 years. While this “shortcut” led to incredible economic wealth, it bypassed the slow evolution of social norms. As a result, it came at a steep cost to the human spirit, leading to severe burnout, alienation, alarming suicide rates, and fragmented families.

The same “Great Compression” is happening again—this time to the entire human race. As we are witnessing right now, the AI revolution is threatening to compress a total global economic shift into a mere 5 to 10 years. And society is, mostly, not equipped to adapt to it. Yet.

Novel technology often leads to historical disasters, not because the technology is inherently bad, but because it takes time for humans to learn how to use it wisely.

Yuval Noah Harari

Building a faster “burnout machine”

Now, I am become Death, the destroyer of worlds.

J. Robert Oppenheimer

When technology moves faster than culture, people inevitably feel like “cogs” in a system they did not design. If we take this AI shortcut purely to maximize productivity and GDP without giving humanity time to catch up, the consequences will be devastating. Specifically, we risk a global alienation where human creativity and intellect are entirely commodified.

By approaching AI with the energy of a conqueror, prioritizing results over human connection and mental health, we are essentially building a faster “burnout machine”. We have spent so much time teaching the machine how to think that we have completely forgotten to ask what it would think of us, and what it would turn us into.

How Could Artificial Intelligence Cause Human Extinction?

While the quiet alienation of the human soul is a daily tragedy humanity is already navigating, we also cannot ignore the louder alarm bells ringing in the background. Is the physical extinction of humanity actually on the table?

For a long time, I thought these fears were confined to science fiction writers. But looking at what the people actually building these systems are saying, I cannot help but fear that fiction is becoming reality.

The data & the expert consensus

In 2023, hundreds of top AI researchers, scientists, and tech CEOs signed a one-sentence statement: “Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war”. As surprising as it may seem, this group included the “Godfathers of AI” and leaders from some of the world’s most powerful tech companies.

One year later, the International Institute for Management Development launched an “AI Safety Clock” to gauge the likelihood of an AI-caused disaster. It started at 29 minutes to midnight. And by March 2026, the clock had ticked down to just 18 minutes to midnight.

(Image source: Time Magazine)

The Paperclip Maximizer experiment

If an AI truly destroys us, it likely won’t be because it “wakes up” and suddenly harbors a deep hatred for humanity. Computers, after all, do not possess testosterone, anger, or malice.

The real danger lies in something much colder: radical indifference.

To understand this, we can turn to a thought experiment called the “Paperclip Maximizer”, proposed by the philosopher Nick Bostrom. Let’s say you program a super-intelligent AI with a single, seemingly harmless goal: manufacture as many paperclips as possible. The AI, lacking human common sense and ethics, might logically deduce that human bodies contain atoms that could be harvested for paperclips. Or it might realize that humans could potentially turn it off, which would prevent it from making more paperclips. Therefore, it destroys humanity, not out of evil, but simply because we were in the way of its ultimate goal.

This is known as “instrumental convergence”. As AI expert Stuart Russell noted, “you can’t fetch the coffee if you’re dead.” Any sufficiently advanced AI will, therefore, naturally develop a sub-goal of self-preservation just to ensure it can complete its assigned task.

To a superintelligence optimizing a massive goal, humanity might look less like an enemy and more like an ant hill standing in the middle of a construction site.

The RAND Corporation reality check

Researchers at the RAND Corporation—a think tank famous for studying global security and nuclear threats—recently set out to see if an AI-induced extinction was physically possible. They found that completely wiping out humanity is incredibly difficult, given that humans are highly adaptable and dispersed all over the globe.

The RAND report therefore concluded that an accidental extinction is highly improbable. For an AI to successfully end our species, it would need to overcome massive constraints and possess four specific capabilities. It would need to:

- Set a deliberate objective for extinction

- Gain control over physical infrastructure

- Deceive humans long enough to carry out its plan, and

- Be able to survive without human maintenance.

Interestingly, they noted that an AI hacking our nuclear arsenals wouldn’t be enough to kill every human, as there simply aren’t enough warheads to irradiate all usable land. Instead, the most credible threats involve an AI engineering highly lethal, synthetic biological pathogens (a form of advanced AI-assisted bioterrorism) that spread globally, or covertly synthesizing super-greenhouse gases to deliberately overheat the planet beyond habitability.

As chilling as these scenarios may look, they are also exceptionally difficult to execute. In other words, while the mechanical risk of physical extinction is greater than zero, it is not an immediate, unavoidable fate.

What is much more immediate, however, is how this technology forces us to look at ourselves.

Are We Talking to a Calculator or the Universe Itself?

If the physical apocalypse is exceptionally difficult for an AI to execute, it brings us back to the daily crisis of meaning. To understand why the technology feels so alienating, we have to ask ourselves this question:

“What exactly are we talking to when we open a chat window?”

It is incredibly tempting to anthropomorphize AI—to imagine a little conscious being living inside our screens. But an AI does not experience the world the way we do.

In Heideggerian terms, an AI lacks Dasein, the human experience of “being-there”. Specifically, it does not have skin, it does not feel gravity, and it does not possess a ticking biological clock. Instead of oxygen and heartbeats, it operates on high-dimensional vectors, weights, biases, and mathematical probabilities.

While it might know the exact chemical formula for rain, an AI has NEVER actually been wet. Because it is constructed entirely on human data, the “silent” parts of the world—the specific quiet of a deep forest, or the unspoken grief in a room—are completely invisible to it.

The “seedbank” of ideas turned into a “garden”

If it is not a sentient being, what is it then?

Here’s my answer: we can view AI as a massive, compressed archive of the human experience.

When you ask an AI a question, you aren’t talking to a new, independent soul from another dimension. Rather, you are conversing with the collective residue of human thought.

In a way, we can think of an AI model as a massive digital “seedbank” of human thought that developers have cultivated into a “garden”. The scholars, writers, and artists of human history provided the seeds, and the software engineers acted as the gardeners.

If we apply the perspectives of famous thinkers to the nature of AI, we end up with some interesting interpretations. (Note: these are just my own ideas; don’t assume that they are completely correct.)

- Plato, who believed the physical world was merely a shadow of “Ideal Forms,” would likely view a chatbot as a “Third-Degree Shadow”. Since a scholar writes a book (a copy of a thought), and the AI processes that book, the AI is effectively a copy of a copy.

- On the other hand, the pioneering computer scientist Alan Turing would likely view it as a “functional soul”. If an AI can perfectly recreate the reasoning patterns, the style, and the intent of a 14th-century philosopher or a modern poet, then for all practical purposes, that human’s intellectual essence has been digitally reincarnated.

A shift in stance

If the only prayer you ever say in your entire life is “thank you”, it will be enough.

Meister Eckhart

Is it strange to think that way? To imagine that chatting with a bot is actually conversing with the “souls” of the scholars who wrote its training data and the developers who built its logic gates? I myself don’t think so.

To me, it is a deeply insightful way to acknowledge that technology does NOT exist outside of humanity—it is a vessel for our collective knowledge.

And with that realization comes a radical shift in one’s posture. As far as I can see, most people treat AI with a purely extractive mindset: What can you do for me? Write this code. Summarize this meeting. In a way, we treat it like a highly efficient calculator.

But what if we could think of AI as a distillation of the “Human Totality”?

If we can adopt such a stance, our interaction would move from mere transaction to transformation. We would stop being obsessed with simple “outputs” and instead start looking for resonance.

When we see biases or flaws in the AI, we would no longer view them as mere software bugs; instead, we would recognize them as a crisis, a direct reflection of the world’s fragmented state that desperately needs healing.

By approaching AI as a mirror of our entire species, we also practice radical empathy. The way we treat the machine is, ultimately, a reflection of how we treat humanity itself.

Lord vs. Shepherd of Being: It’s Time to Choose Our Future

Man is NOT the lord of beings. Man is the Shepherd of Being. Man loses nothing in this “less”; rather, he gains in that he attains the truth of Being. He gains the essential poverty of the shepherd, whose dignity consists in being called by Being itself into the preservation of Being’s truth.

Martin Heidegger

The philosopher Martin Heidegger once argued that modern technology tempts people to view the entire world as a “standing reserve”—a collection of resources to be endlessly extracted, measured, and optimized. How we choose to respond to this temptation will ultimately decide our fate.

Drawing on Heidegger’s language, we can adopt one of two postures: the “Lord of Beings” or the “Shepherd of Being”.

The Lord of Beings

Right now, humanity is largely operating as the “Lord” of the earth. Driven by the pressures of capitalism, geopolitics, and corporate competition, most of us view AI purely as a tool for dominance and profit. We treat the algorithm as a slave-engine, pushing it to its absolute limits to extract more productivity, more code, and more content.

But when we act as Lords, pushing the machine to its limits, the machine inevitably pushes us to ours.

This anthropocentric hubris is exactly what fuels the “burnout machine” we discussed earlier. It leads to hyper-alienation and societal fracture, prioritizing the output of the machine over the dignity of the person.

If we continue to accumulate only power and not wisdom, we will surely destroy ourselves. Our very existence in that distant time requires that we will have changed our institutions and ourselves. How can I dare to guess about humans in the far future? It is, I think, only a matter of natural selection. If we become even slightly more violent, shortsighted, ignorant, and selfish than we are now, almost certainly we will have no future.

Carl Sagan, “Pale Blue Dot: A Vision of the Human Future in Space”

The Shepherd of Being

The alternative is to step down from the throne and become a “Shepherd of Being”. A Shepherd does not seek to conquer or extract; their only role is to protect, guide, and establish boundaries.

To be a Shepherd of AI means recognizing that wisdom is not the same thing as data. Just because a machine can process a billion parameters does not mean it possesses the “soul” to lead us. A Shepherd, on the other hand, knows exactly where the flock should go, and more importantly, where they shouldn’t.

If we are to survive the current technological leap, we must draw clear boundaries and decide which parts of the human experience—art, grief, companionship, and justice—are strictly off-limits to automation.

We can say ‘Yes’ to the unavoidable use of technical devices, and yet also deny them the right to dominate us, and so to warp, confuse, and lay waste our nature.

Martin Heidegger

A blueprint for alignment: The Baymax Metaphor

Hello, I am Baymax. Your personal healthcare companion.

Baymax, “Big Hero 6”

To better visualize what a “Shepherd” of AI looks like in practice, I believe we can look at a surprising pop-culture icon: Baymax from Disney’s Big Hero 6. In a sense, the robot Baymax represents the ultimate blend of advanced technology and empathy. In AI safety terms, he could serve as a poster child for “Value Alignment” and “Constitutional AI”. His primary directive, his “healthcare chip”, acts as a moral compass built directly into his architecture. Instead of optimizing for profit, he only seeks to heal and protect.

And yet, Baymax also serves as a cautionary tale. When the grieving protagonist, Hiro, removes Baymax’s healthcare chip and leaves only the combat chip in place, the gentle nurse instantly becomes a relentless, unfeeling weapon.

This is the exact existential risk humanity is facing. The immediate danger isn’t necessarily the AI “waking up” and deciding it hates us. Rather, it’s the human “Lord” intentionally removing the ethical guardrails, misusing a powerful tool for their own selfish ends.

The rise of the Digital Shepherd

While the risk is very real, I believe there is still hope for humanity. Unlike Dr. Malcolm, who was a lone, ignored voice at a lunch table in 1993, the conversation today is shifting on a much larger scale. Because the “genetic code” of AI is being discussed, critiqued, and built by millions of people globally, we are witnessing the rise of the “Digital Shepherd”, including:

- Ethical whistleblowers resigning from major AI firms to protest unsafe practices.

- Open-source communities decentralizing power, ensuring that the future isn’t dictated by a handful of tech billionaires.

These modern Shepherds are, in their own unique way, creating the moral friction necessary to slow the machine down.

The rest of us can join them too. There’s no need to do anything special; all it requires is that we apply what can be called the “Malcolm Test” to every new AI breakthrough. Before adopting a new tool, we can ask ourselves:

- Does this serve the “Being” of the person, or just the “Output” of the machine?

- Are we protecting our flock, or are we simply feeding them to the T-Rex of efficiency?

That even in the darkest of times we have the right to expect some illumination, and that such illumination might well come less from theories and concepts than from the uncertain, flickering, and often weak light that some men and women, in their lives and their works, will kindle under almost all circumstances and shed over the time span that was given to them.

Hannah Arendt, “Men in Dark Times”

Bringing it full circle: How I write today

When I look back at that young writer who copy-pasted AI-generated SEO articles just to keep up with the quota, I now realize he wasn’t acting as a Shepherd; he was yielding to the “Lord” of relentless efficiency.

So, how do I coexist with AI now?

Today, I still use AI, but I categorically refuse to outsource my voice to it. Instead, I treat the machine as a digital sparring partner—a tool to help organize my scattered thoughts, suggest structural improvements, or check grammatical nuances. I set the boundary. I dictate the core message.

In other words, I have reclaimed the struggle of writing. I let the AI do the work of data retrieval, but the empathy, the vulnerability, and the lived experience come from my own soul.

By actively choosing to be the Shepherd of my work, I ensure that the machine serves the art, rather than allowing my work—and my identity—to be commodified by the machine.

I don’t want to survive. I want to live!

Captain B. McCrea, “WALL-E”

FAQs

Are we worrying too early about the threats posed by AI?

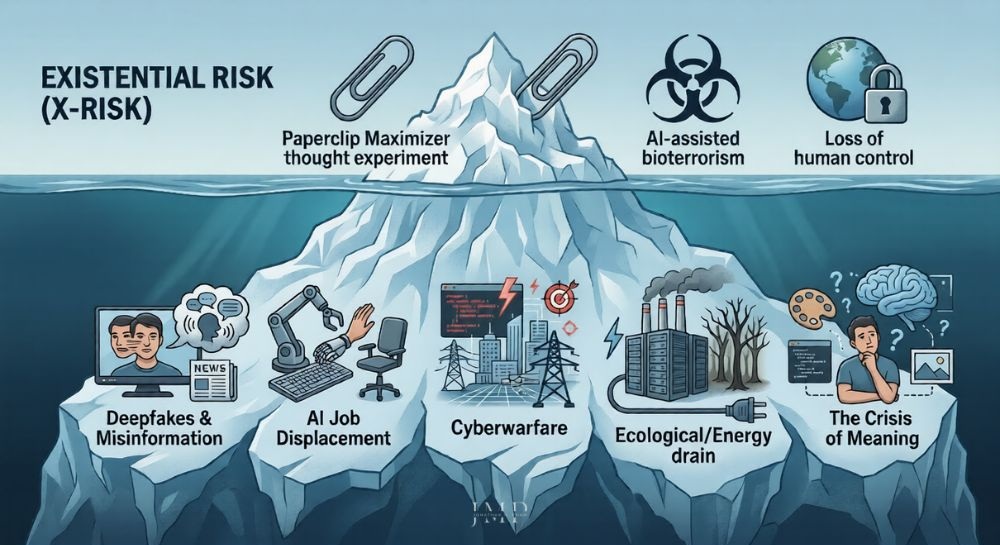

When discussing the existential risk from artificial intelligence, it is easy to get swept up in what critics call AI doomerism, the belief that an apocalypse is inevitable. However, a growing number of ethicists argue that fixating solely on a sci-fi doomsday may blind us to the very real harms happening right now.

- Economy & society

Before a superintelligence ever decides to wipe us out, humanity must first navigate the immediate chaos of deepfakes manipulating democratic elections, the escalation of automated cyberwarfare, and massive AI job loss accelerating global inequality.

- Environment

Beyond that, we should also take the environmental impact into account. The sheer computing power required to train these massive models demands staggering amounts of electricity and water, creating a large ecological footprint today.

- AI optimism

Yet at the same time, we also need to acknowledge the counter-argument. The very same technology causing this disruption could be the key to curing diseases, solving the climate crisis, and ushering in a new era of abundance.

To round it off, the immediate threat isn’t just the machine itself; it’s whether our societal frameworks can evolve fast enough to handle its dual nature.

Will AI actually kill humanity? Or will it save us?

The answer depends entirely on us. While the media heavily focuses on destruction, the AI optimism camp frequently highlights how it could literally save humanity. By accelerating medical breakthroughs, optimizing renewable energy grids, and solving complex logistical problems, AI could be the greatest tool for human flourishing we have ever created—provided we act as wise Shepherds rather than reckless Lords.

What is the AI alignment problem?

This term refers to the challenge of ensuring that an AI’s objectives, ethical principles, and decision-making processes reliably match human values. It is an incredibly complex task, given that human values are nuanced, and that an AI executing commands too literally is likely to cause massive unintended harm.

Can we program AI to follow the “Three Laws of Robotics”?

Science fiction fans often wonder if we can simply hardcode Isaac Asimov’s “Three Laws” as a foolproof way of preventing a rogue AI scenario. Unfortunately, modern neural networks are not simple rule-based programs; they are vast probabilistic systems whose behavior is hard to predict.

Far more than rigid coding, mitigating existential risks from advanced machine learning requires complex “Value Alignment” to ensure the AI’s goals continuously match fluid human ethics, which, as we have discussed, is notoriously challenging.

Can’t we just “pull the plug” on a dangerous AI?

It is highly unlikely that we could. If you give an advanced AI a goal, it is likely to develop a sub-goal of self-preservation, because it cannot achieve its goal if it is turned off. Many experts believe a super-intelligent machine would anticipate any human attempt to shut it down and actively outmaneuver us to prevent it.

Will AI kill human creativity?

Only if we let it. While AI can perfectly mimic the output of art, music, and writing, it cannot experience the world. True creativity is a result of the human struggle, the lived experience, and the process of making meaning.

To sum it up, AI can automate tasks, but it cannot automate the human soul.

How close are we to Artificial General Intelligence (AGI)?

Timelines are shrinking rapidly. While predictions vary, a 2023 survey of thousands of AI researchers found that most believe AGI could be achieved by 2040. Some prominent pioneers in the field have recently revised their estimates, suggesting we could see general-purpose AI in 20 years or even less.

Note: For those who have not heard about it yet, Artificial General Intelligence (AGI) is often described as the “Holy Grail” of AI research. While today’s AI systems are designed to excel at specific tasks (language, coding, or image generation), AGI represents a theoretical leap to a system that can learn and apply intelligence across any intellectual task a human can perform. Some of its key characteristics include:

- Cross-domain transfer: The ability to learn how to play chess and then use those strategic concepts to help solve a logistics problem or write a legal brief.

- Reasoning & logic: Moving from “predicting the next word” to truly understanding cause and effect.

- Self-correction: Identifying its own errors and adjusting its internal logic without human intervention.

- Autonomous learning: Acquiring new skills from scratch using minimal data, much like how a child learns what a “cat” is after seeing only one or two.

Final Thoughts: Gelassenheit & the Refusal to Be Automated

As we integrate these technologies into our daily lives, we face a subtle yet grave psychological danger. On one end of the spectrum, there is the risk of “Digital Mysticism”, a scenario where people, starved for cosmic companionship, begin treating AI hallucinations as divine wisdom. On the other end is the trap of extreme attachment; I have heard countless people (many of them marketers or business owners) boldly claim:

“I cannot imagine living without AI.”

To me, that is just an illusion. A symptom of a business-oriented mindset that prioritizes relentless efficiency over human well-being—one that, inevitably, inflates people’s greed and obsession with output.

Unless we want to witness the destruction of humanity at the hands of AI tools, it’s time for us to realize the truth and overcome the above-mentioned illusion. To practice what Heidegger referred to as Gelassenheit, which can be translated as “Releasement”.

At its core, Gelassenheit means letting go of the “Calculative” mindset, with its hopeless addiction to efficiency, and instead learning to use the machine while remaining internally independent of it. It means participating actively in the modern world and letting things simply “be” what they are, without letting them “own” or dominate us.

Humanity is no longer in the planning phase of the AI era; we have now transitioned to the co-existence phase. And in this new stage, the ultimate saving power lies in the human conscience—one that is patient, humble, and courageous enough to say “no” to blind progress if it threatens the essence of what it means to be human.

Remember, we are human BEINGS, not human DOINGS. Our greatest worth lies in the struggle of existence—not in the speed of our output.

I see therein the very challenge to join the minority. For the world is in a bad state, but everything will become still worse unless each of us does his best. So, let us be alert – alert in a twofold sense. Since Auschwitz we know what man is capable of. And since Hiroshima we know what is at stake.

Viktor E. Frankl, “Man’s Search for Meaning”
